Reinforcement learning is a powerful technique that allows AI agents to learn from their environment through trial and error, making it a key technology behind advancements like self-driving cars and game-playing algorithms.

It’s even more important now that Reinforcement Learning from Human Feedback (RLHF) is gaining traction as a way to fine-tune large language models like GPT-4, Claude, and Llama 3.

Finding the right reinforcement learning course can be overwhelming, especially with the abundance of options available online.

Many learners struggle to find a course that offers the right balance of depth, accessibility, and practical application.

It’s important to find a course that not only explains the concepts clearly but also allows you to put those concepts into practice through hands-on exercises and projects.

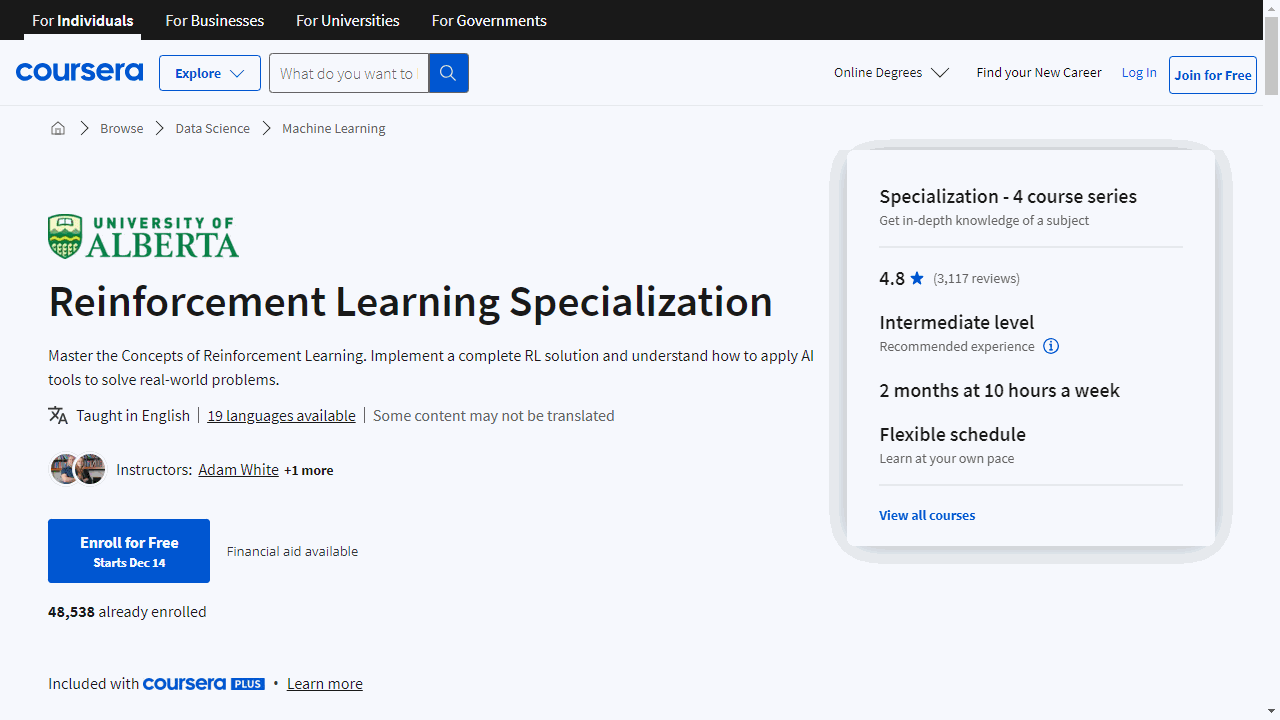

For the best reinforcement learning course overall on Coursera, I highly recommend “Reinforcement Learning Specialization” by the University of Alberta.

This specialization provides a deep dive into reinforcement learning, covering key topics like SARSA, function approximation with neural networks, and policy gradient methods.

It’s praised for its comprehensive curriculum, engaging learning activities, and real-world applications.

The University of Alberta has a strong reputation in the field of reinforcement learning, making this specialization a top choice for learners looking to master the subject.

But there’s more to discover than just the best overall.

I’ve curated a list of other top-rated reinforcement learning courses on Coursera, including options tailored for finance professionals, those seeking a comprehensive deep learning approach, and learners wanting to focus on trading strategies.

Keep reading to find the perfect course to take your reinforcement learning journey to the next level!

Reinforcement Learning Specialization

Provider: University of Alberta

This specialization is a direct path to proficiency in reinforcement learning, offering a deep understanding of AI’s automated decision-making.

If you’re ready to dive into topics like SARSA, function approximation with neural networks, and policy gradient methods, this is the learning experience you’ve been looking for.

The starting point, “Fundamentals of Reinforcement Learning,” equips you with the essentials.

You’ll learn to frame problems using Markov Decision Processes and tackle the exploration/exploitation tradeoff, crucial for intelligent decision-making.

You’ll also gain practical experience with dynamic programming, a key technique for solving real-world control problems.
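
To give a feel for what dynamic programming looks like in practice, here is a minimal value iteration sketch. The two-state MDP, its state and action names, and its rewards are made up for illustration and are not taken from the course.

```python
# Hypothetical toy MDP: transitions[state][action] = [(prob, next_state, reward), ...]
transitions = {
    "low": {
        "wait": [(1.0, "low", 0.0)],
        "charge": [(1.0, "high", -1.0)],
    },
    "high": {
        "wait": [(1.0, "high", 1.0)],
        "work": [(0.5, "high", 3.0), (0.5, "low", 3.0)],
    },
}


def action_value(outcomes, values, gamma):
    """Expected one-step return of an action under the current value estimates."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in outcomes)


def value_iteration(transitions, gamma=0.9, tol=1e-8):
    """Repeatedly apply the Bellman optimality backup until values stop changing."""
    values = {s: 0.0 for s in transitions}
    while True:
        delta = 0.0
        for s, actions in transitions.items():
            best = max(action_value(o, values, gamma) for o in actions.values())
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            return values


def greedy_policy(transitions, values, gamma=0.9):
    """Pick, in each state, the action with the highest expected return."""
    return {
        s: max(actions, key=lambda a: action_value(actions[a], values, gamma))
        for s, actions in transitions.items()
    }


values = value_iteration(transitions)
policy = greedy_policy(transitions, values)
print(policy)  # {'low': 'charge', 'high': 'work'}
```

Note that this only works because the transition probabilities and rewards are known up front, which is exactly the assumption that the sample-based methods later in the specialization drop.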

Moving on to “Sample-based Learning Methods,” you’ll delve into algorithms that learn optimal policies through trial and error, like Monte Carlo methods and Q-learning.
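
As a rough illustration of learning by trial and error, the tabular Q-learning update can be sketched in a few lines. The state and action names, the step size `alpha`, and the discount `gamma` below are illustrative assumptions, not values from the course.

```python
from collections import defaultdict


def q_learning_update(q, state, action, reward, next_state, next_actions,
                      alpha=0.1, gamma=0.9):
    """Move Q(state, action) toward reward + gamma * max_a Q(next_state, a)."""
    best_next = max((q[(next_state, a)] for a in next_actions), default=0.0)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


# Hypothetical experience: taking "right" in s0 gave reward 1.0 and led to s1.
q = defaultdict(float)
q[("s1", "right")] = 2.0
new_value = q_learning_update(q, "s0", "right", 1.0, "s1", ["left", "right"])
```

The agent never needs a model of the environment here: a single observed transition is enough to nudge its estimate.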

This course emphasizes the importance of exploration and introduces you to Dyna, an innovative approach that blends planning with learning for faster results.
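
The idea behind Dyna can be sketched as one real update followed by several "imagined" updates replayed from a learned model. This is a simplified Dyna-Q step that assumes a deterministic environment; the function name, state names, and hyperparameters are hypothetical.

```python
import random
from collections import defaultdict


def dyna_q_step(q, model, transition, actions, planning_steps=5,
                alpha=0.1, gamma=0.9, rng=random):
    """One Dyna-Q step: learn from a real transition, then plan from the model."""

    def update(s, a, r, s2):
        best_next = max(q[(s2, b)] for b in actions)
        q[(s, a)] += alpha * (r + gamma * best_next - q[(s, a)])

    s, a, r, s2 = transition
    update(s, a, r, s2)         # direct learning from real experience
    model[(s, a)] = (r, s2)     # remember what happened (deterministic model)
    for _ in range(planning_steps):
        # Planning: replay a previously seen state-action pair from the model.
        ps, pa = rng.choice(list(model))
        pr, ps2 = model[(ps, pa)]
        update(ps, pa, pr, ps2)


q = defaultdict(float)
model = {}
dyna_q_step(q, model, ("s0", "go", 1.0, "s1"), actions=["go"], planning_steps=5)
```

With five planning steps, the single real transition is effectively reused six times, which is where the speed-up comes from.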

In “Prediction and Control with Function Approximation,” you’ll address challenges in large or infinite state spaces.

Here, function approximation comes into play, allowing you to teach computers to generalize and maximize rewards.

You’ll also explore policy gradient methods, which learn a parameterized policy directly instead of deriving it from a value function.
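
To make "learning the policy directly" concrete, here is a minimal REINFORCE-style update for a softmax policy over two actions. It is a sketch for a single-step (bandit) setting, with a made-up reward and step size, not the course's own implementation.

```python
import math


def softmax(prefs):
    """Turn action preferences into probabilities."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_update(prefs, action, reward, step_size=0.1):
    """Shift preferences along reward * grad log pi(action).

    For a softmax policy, the gradient of log pi(action) with respect to
    preference i is (1 if i == action else 0) - pi(i).
    """
    probs = softmax(prefs)
    return [
        p + step_size * reward * ((1.0 if i == action else 0.0) - probs[i])
        for i, p in enumerate(prefs)
    ]


# Action 1 was taken and earned a reward of 1.0, so it becomes more likely.
prefs = reinforce_update([0.0, 0.0], action=1, reward=1.0)
probs = softmax(prefs)
```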

This course requires a solid foundation in math, Python, and the principles covered in earlier courses.

The capstone, “A Complete Reinforcement Learning System,” is where you apply your knowledge to create a full-fledged RL solution.

You’ll make critical decisions on problem formulation, algorithm and parameter selection, and representation design, culminating in a project that simulates real-world RL applications.

Throughout the specialization, you’ll develop skills in AI, machine learning, intelligent systems, and more.

You’ll be hands-on, coding and implementing algorithms.

By the end, you’ll be prepared to apply reinforcement learning to practical problems with confidence.

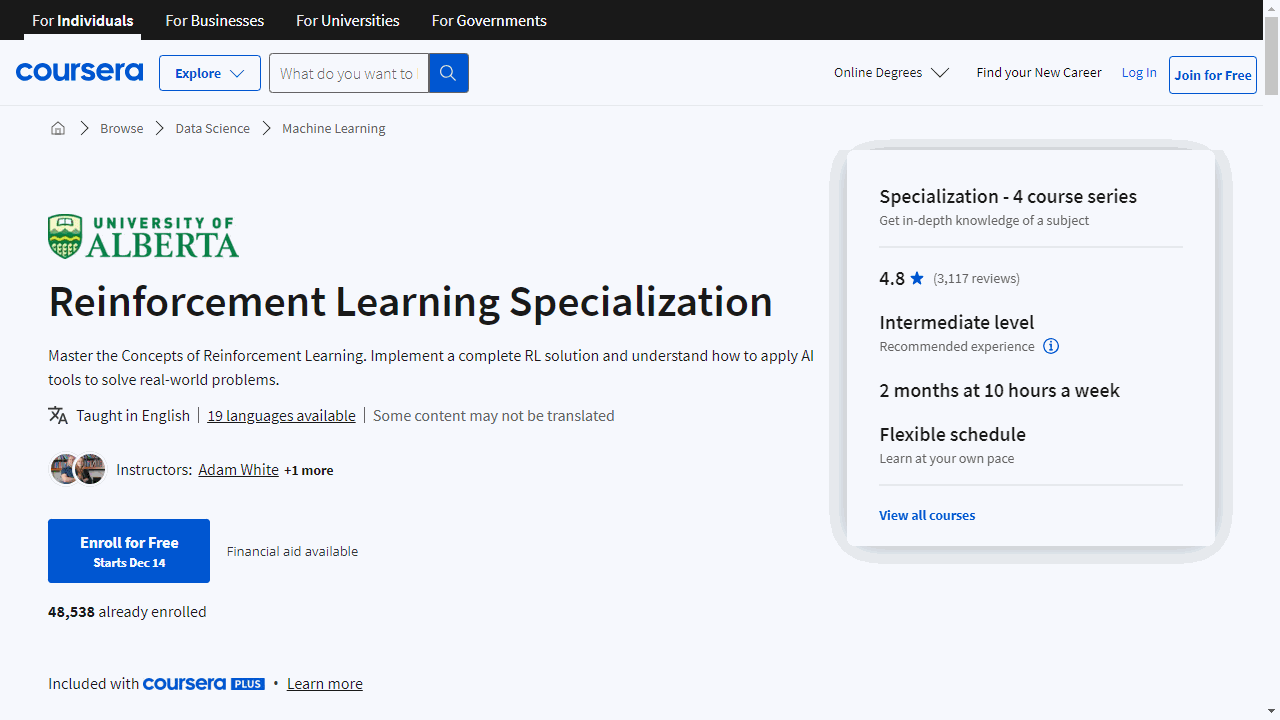

Machine Learning and Reinforcement Learning in Finance Specialization

Provider: New York University

This specialization is a practical journey into the intersection of machine learning and finance, offering a clear path from foundational concepts to advanced applications.

Kick off with the “Guided Tour of Machine Learning in Finance,” where you’ll grasp the essentials of ML and its financial applications.

If you’re a finance professional, a day trader, or a student in a related field, this course is your gateway.

You’ll need familiarity with Python, linear algebra, and basic calculus to tackle the assignments.

Progress to the “Fundamentals of Machine Learning in Finance” to deepen your understanding of supervised, unsupervised, and reinforcement learning.

You’ll get to grips with Python’s ML tools and apply unsupervised learning to a portfolio trading strategy.

This course builds on the first, sharpening your problem-solving skills.

The third course, “Reinforcement Learning in Finance,” is where your skills start to pay off.

You’ll delve into RL’s core concepts and apply them to finance’s big questions like portfolio optimization and option pricing.

Get ready to engage with Q-learning and model market dynamics.

Prior completion of the first two courses will set you up for success here.

Conclude with the “Overview of Advanced Methods of Reinforcement Learning in Finance,” where you’ll explore the advanced territory, including the links between RL, option pricing, and physics.

You’ll also discover how to apply RL to high-frequency trading and cryptocurrencies.

This course will leave you equipped to discuss complex financial concepts and apply RL to diverse financial scenarios.

Throughout this specialization, you’ll develop skills in Python, including Jupyter notebooks, and master key concepts such as risk management and trading strategies.

You’ll also touch on the forefront of finance, including cryptocurrencies and peer-to-peer lending.

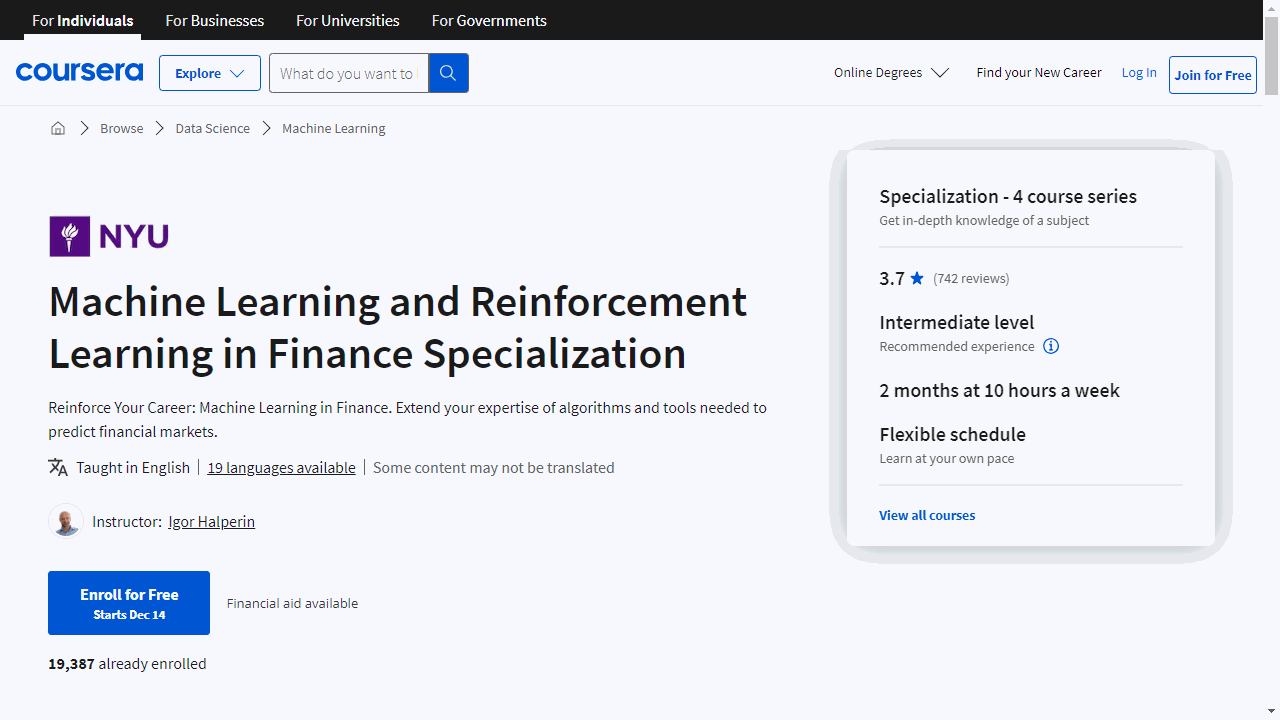

Unsupervised Learning, Recommenders, Reinforcement Learning

Provider: DeepLearning.AI

This is a comprehensive program that gives you a solid understanding of unsupervised learning, the mechanics behind recommender systems, and the principles of reinforcement learning.

You’ll start with unsupervised learning, where you’ll master clustering through the K-means algorithm.

This technique is essential for grouping data without predefined labels, like sorting different types of fruit without knowing their names.
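
If you want a feel for K-means before enrolling, the algorithm fits in a few lines of plain Python. The points and starting centroids below are invented for illustration, and the course itself may use a different implementation.

```python
def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points to the nearest centroid and re-averaging."""
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centroids[i])),
            )
            clusters[nearest].append(p)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster)) if cluster else c
            for cluster, c in zip(clusters, centroids)
        ]
    return centroids, clusters


points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, centroids=[(0.0, 0.0), (10.0, 10.0)])
```

No labels are passed in anywhere: the two groups emerge purely from the distances between points.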

You’ll also learn to identify outliers in data using anomaly detection, which is crucial for recognizing patterns that don’t fit the norm.
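
A simple way to picture anomaly detection is to fit a Gaussian to "normal" data and flag anything too many standard deviations from the mean. The sketch below uses a z-score threshold as a simplification; the latency numbers and the threshold of 3 are assumptions for illustration.

```python
import statistics


def fit_gaussian(values):
    """Estimate the mean and standard deviation of the normal data."""
    return statistics.fmean(values), statistics.pstdev(values)


def is_anomaly(x, mean, std, threshold=3.0):
    """Flag x if it lies more than `threshold` standard deviations from the mean."""
    return abs(x - mean) / std > threshold


normal_latencies = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2]  # hypothetical measurements
mean, std = fit_gaussian(normal_latencies)

print(is_anomaly(10.1, mean, std))  # False
print(is_anomaly(12.0, mean, std))  # True
```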

The course then guides you through the intricacies of recommender systems.

You’ll understand collaborative filtering, where algorithms predict your preferences based on others’ likes and dislikes.

You’ll also delve into content-based filtering, which suggests items similar to what you’ve enjoyed before.

Practical TensorFlow exercises will help you apply these concepts in real-world scenarios.

When it comes to reinforcement learning, the course shines by breaking down complex ideas into understandable chunks.

You’ll explore how to develop policies that guide decision-making processes, akin to teaching a robot how to navigate a new planet.

The Bellman Equation will become a tool in your arsenal, helping you to optimize these decisions.

You’ll also refine algorithms with techniques like ε-greedy policy and mini-batch updates, enhancing the learning capabilities of AI agents.
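
The ε-greedy idea is simple enough to sketch directly: explore with probability ε, otherwise pick the action that currently looks best. The function name and example values here are illustrative assumptions.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Return a random action with probability epsilon, else the greedy action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore
    return max(range(len(q_values)), key=lambda a: q_values[a])  # exploit


# With epsilon=0 the choice is always the highest-valued action (index 1 here).
action = epsilon_greedy([0.1, 0.9, 0.3], epsilon=0.0)
```

In practice ε is often started high and decayed over time, so the agent explores early and exploits later.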

Throughout the course, optional labs offer hands-on experience, and insights from AI pioneers Andrew Ng and Chelsea Finn provide inspiration and context.

If you’re eager to share your knowledge, there’s even an opportunity to mentor fellow learners.

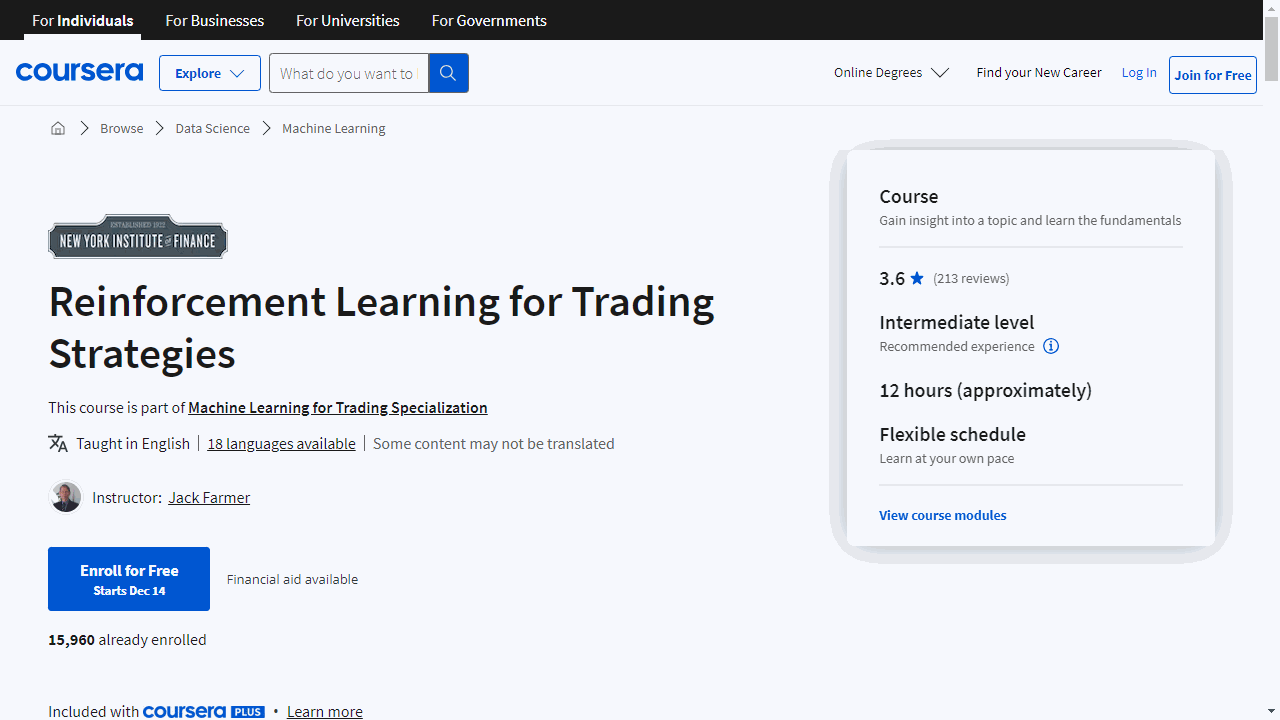

Reinforcement Learning for Trading Strategies

Provider: New York Institute of Finance

You’ll start with the basics of reinforcement learning, understanding its evolution and how it applies to trading decisions through methods like value iteration and TD learning.

You’ll then delve into Q-learning, a pivotal technique that estimates the value of each action from experience, and see how it can improve a trading strategy’s performance.

The course also gives you hands-on experience with Qwiklabs, where you can apply these concepts to real-world trading scenarios.

The curriculum covers the intricacies of electronic trading and data-driven learning, drawing lessons from TD-Gammon, a program that mastered backgammon through reinforcement learning.

You’ll also tackle Deep Q-Networks, covering their loss function, replay memory, and a code implementation.

Policy gradients and actor-critic methods are next, offering strategies akin to having a personal coach for your trading bot.

You’ll also work with LSTM networks, which are well suited to modeling sequential data such as historical market prices.

As you advance, the course guides you through developing a dynamic reinforcement learning trading system, complete with steps and checks to ensure it’s ready for the market.

Risk management is a key focus, teaching you to mitigate investment and trading risks and reduce portfolio volatility.

Finally, the course introduces AutoML, a tool that automates machine learning model creation for vision, NLP, and tabular data, and shows how it can support your financial strategies.

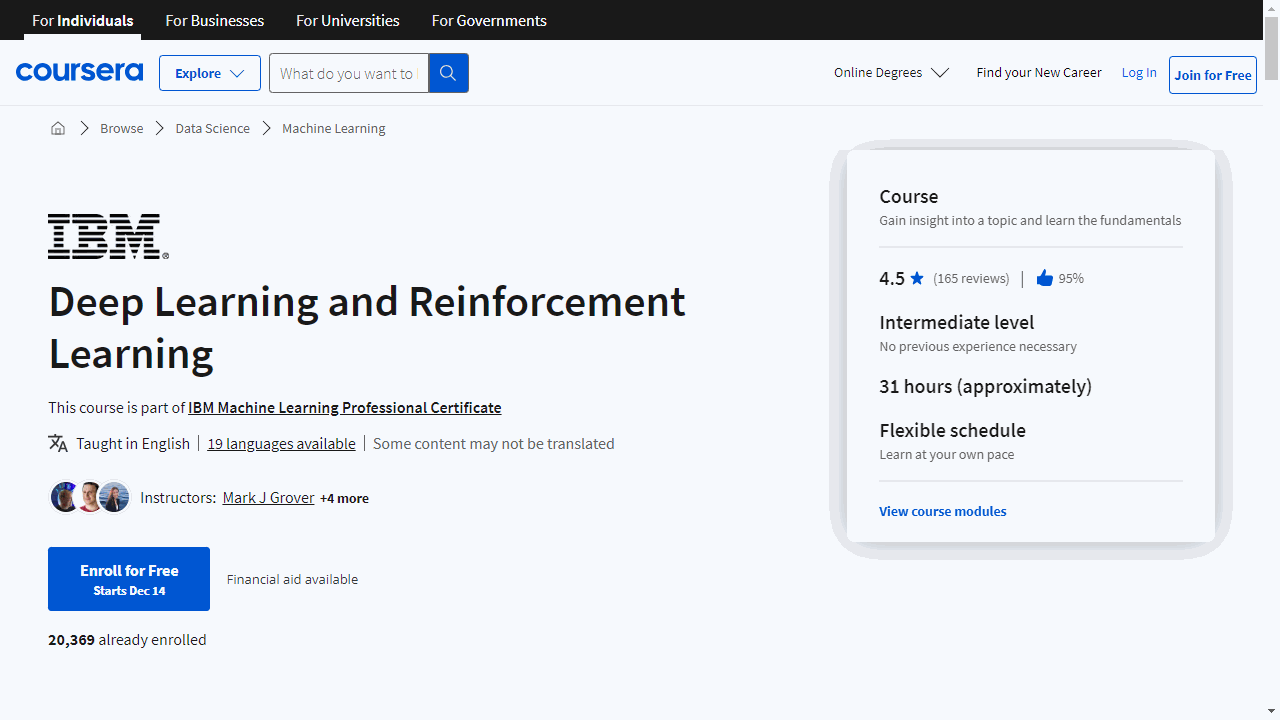

Deep Learning and Reinforcement Learning

Provider: IBM

This is a comprehensive program that guides you from the fundamentals of neural networks to the complexities of reinforcement learning, all with a hands-on approach.

You’ll begin with neural network basics, using scikit-learn to understand neurons and forward propagation.

The course simplifies these concepts with matrix representation, making it easier for you to grasp the mechanics of neural information flow.

Gradient descent, the process of iteratively adjusting your network’s weights to reduce its error, is covered in detail.
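
Stripped of the neural network, gradient descent is just "step downhill repeatedly." Here is a minimal sketch on a one-variable function; the function, learning rate, and step count are arbitrary choices for illustration.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to reduce the function value."""
    w = start
    for _ in range(steps):
        w -= learning_rate * grad(w)
    return w


# Minimize f(w) = (w - 3)^2, whose gradient is 2 * (w - 3); the minimum is at w = 3.
w_min = gradient_descent(lambda w: 2 * (w - 3), start=0.0)
```

In a real network, `w` is a large set of weights and the gradient comes from backpropagation, but the update rule has the same shape.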

You’ll compare various methods to optimize your learning model, with optional notebooks available for a deeper dive.

Training a neural network is next, where you’ll master backpropagation, the technique networks use to compute how each weight contributed to the error so they can learn from it.

You’ll also explore different activation functions, including the sigmoid, to determine neuron responses.

Keras, a leading deep learning library, is introduced for practical application.

You’ll follow a workflow to implement neural networks, engage in activities, and have the chance to experiment with regression models and image loading.

The course then delves into the intricacies of training neural networks, discussing optimizers, momentum, data shuffling, and transforms.

You’ll learn how to fine-tune your network’s learning process and apply these techniques in a hands-on Keras grid search activity.

CNNs are a focal point, especially for image-related tasks.

You’ll work with image datasets, understand convolution mechanics, and experiment with settings like padding and stride.
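
Padding and stride are easiest to understand through their effect on output size. The helper below applies the standard formula for one spatial dimension; the function name and example sizes are my own, not the course's.

```python
def conv_output_size(input_size, kernel_size, padding=0, stride=1):
    """Output length along one dimension: floor((n + 2p - k) / s) + 1."""
    return (input_size + 2 * padding - kernel_size) // stride + 1


print(conv_output_size(28, 3))                       # 26: no padding shrinks the image
print(conv_output_size(28, 3, padding=1))            # 28: padding of 1 preserves the size
print(conv_output_size(28, 3, padding=1, stride=2))  # 14: stride 2 roughly halves it
```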

The course includes a CNN activity to solidify your knowledge.

Transfer learning is demystified, showing you how to leverage pre-trained networks to accelerate your own models.

You’ll examine renowned architectures like LeNet and ResNet and learn about regularization to prevent overfitting.

RNNs, including LSTMs and GRUs, are covered for their ability to handle sequential data like text.

You’ll get to grips with word embeddings and participate in RNN activities to apply what you’ve learned.

Autoencoders and their ability to compress and reconstruct data are explained, along with an introduction to variational autoencoders and GANs, which are pivotal for generating new, realistic data.

The course culminates with reinforcement learning, where you’ll teach networks to make decisions through trial and error.

A final project and activity will showcase your newfound skills in this exciting AI domain.

Throughout the course, you’ll have access to a wealth of resources, including notebooks, demo activities, and readings, ensuring you understand each concept thoroughly.

Cutting-edge topics like explainable AI and GPU utilization with Keras are also included, keeping you at the forefront of AI technology.

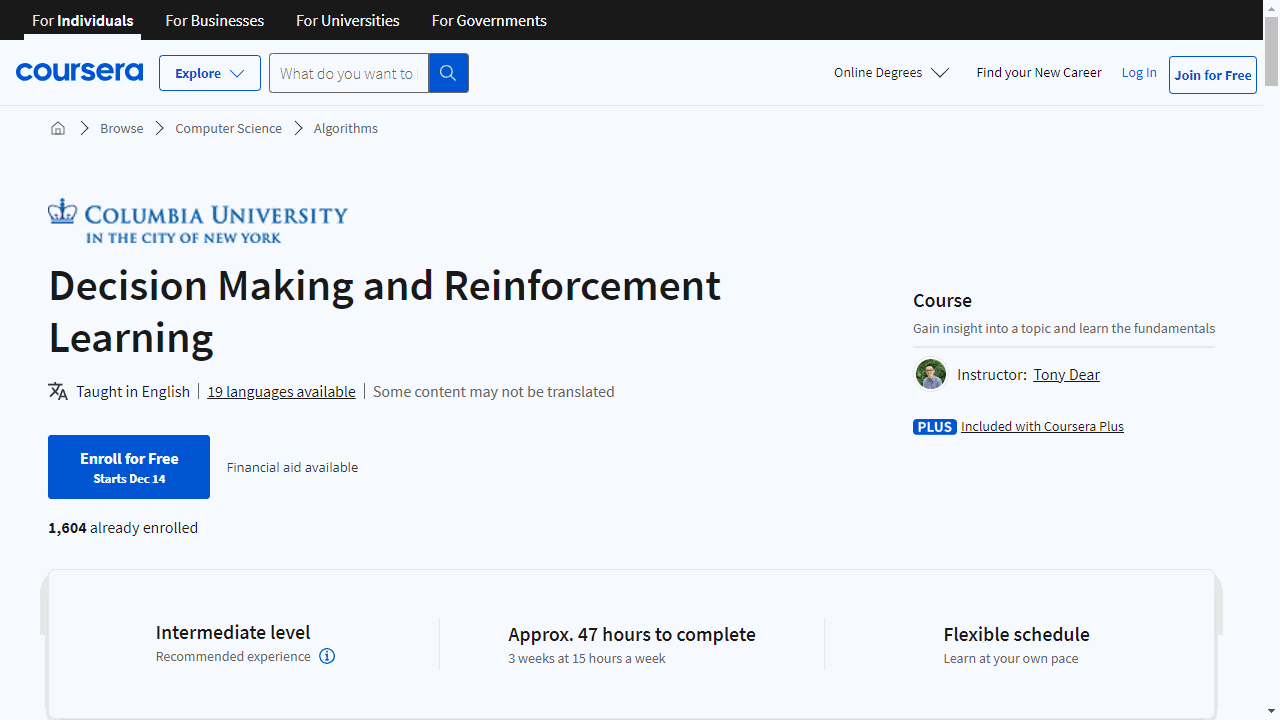

Decision Making and Reinforcement Learning

Provider: Columbia University

This course is designed to give you a solid foundation in how machines make intelligent decisions.

You’ll start by learning about utility theory, which is key to understanding how to evaluate different actions based on their usefulness.

This sets the stage for more advanced topics, as you’ll see how to apply this theory to real-world decision-making scenarios.

Next, you’ll tackle the multi-armed bandit problem, which is a classic challenge in reinforcement learning.

You’ll learn strategies like ε-greedy action selection and the upper confidence bound, which are essential for helping machines learn from trial and error.
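
The upper confidence bound idea can be sketched as "estimated value plus a bonus for arms you have rarely tried." The function below follows the common UCB formula with an exploration constant `c`; the names and example numbers are assumptions for illustration.

```python
import math


def ucb_select(counts, values, t, c=2.0):
    """Pick the arm maximizing value + c * sqrt(ln(t) / pulls); untried arms go first."""
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    scores = [v + c * math.sqrt(math.log(t) / n) for v, n in zip(values, counts)]
    return max(range(len(scores)), key=scores.__getitem__)


# Arm 1 has a slightly lower estimate but has barely been tried, so it wins.
arm = ucb_select(counts=[100, 1], values=[0.6, 0.5], t=101)
```

Unlike random exploration, the bonus shrinks as an arm is pulled more often, so exploration is directed at the options the agent knows least about.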

The course then guides you through the Markov Decision Process framework, a cornerstone of reinforcement learning.

You’ll get hands-on experience with gridworld examples, which simplify complex concepts into understandable challenges.

You’ll also master policies, value functions, and the Bellman Optimality Equations, which are crucial for strategic, long-term decision-making.
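
For reference, the Bellman optimality equations are commonly written as follows. The notation is the standard textbook one and is an assumption on my part (the course may use different symbols): $p(s', r \mid s, a)$ is the probability of landing in state $s'$ with reward $r$ after taking action $a$ in state $s$, and $\gamma$ is the discount factor.

$$
v_*(s) = \max_{a} \sum_{s', r} p(s', r \mid s, a)\,\bigl[r + \gamma\, v_*(s')\bigr]
$$

$$
q_*(s, a) = \sum_{s', r} p(s', r \mid s, a)\,\bigl[r + \gamma \max_{a'} q_*(s', a')\bigr]
$$

The first says the best value of a state is the expected return of its best action; the second says the same thing per state-action pair.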

As you progress, you’ll explore partial observability and POMDPs, learning to make informed decisions with incomplete information.

This is a vital skill, as it mirrors the kind of decision-making we often face in real life.

In the latter part of the course, you’ll delve into Monte Carlo methods, temporal difference learning, and n-step methods.

These techniques are all about learning from experience, and you’ll see them in action through practical examples and games like Tic-Tac-Toe.

By the end of the course, you’ll have a deep understanding of key reinforcement learning strategies, including SARSA and Q-Learning.
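
The difference between SARSA and Q-learning comes down to which next action the update looks at. This sketch compares the two targets on a hand-made Q-table; the states, actions, and values are invented for illustration.

```python
def sarsa_target(q, reward, next_state, next_action, gamma=0.9):
    """On-policy: bootstrap from the action the agent will actually take next."""
    return reward + gamma * q[(next_state, next_action)]


def q_learning_target(q, reward, next_state, actions, gamma=0.9):
    """Off-policy: bootstrap from the best available next action."""
    return reward + gamma * max(q[(next_state, a)] for a in actions)


q = {("s1", "left"): 1.0, ("s1", "right"): 5.0}

# Suppose exploration makes the agent take "left" in s1, even though "right" looks better.
on_policy = sarsa_target(q, 0.0, "s1", "left")
off_policy = q_learning_target(q, 0.0, "s1", ["left", "right"])
```

Here the SARSA target reflects the exploratory move, while the Q-learning target assumes the greedy one, which is why the two can learn different behavior in risky environments.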

You’ll also learn about function approximation for handling large state spaces.

Throughout your learning journey, you’ll engage in discussions, receive feedback, and be part of a community that values academic honesty and respectful communication.
