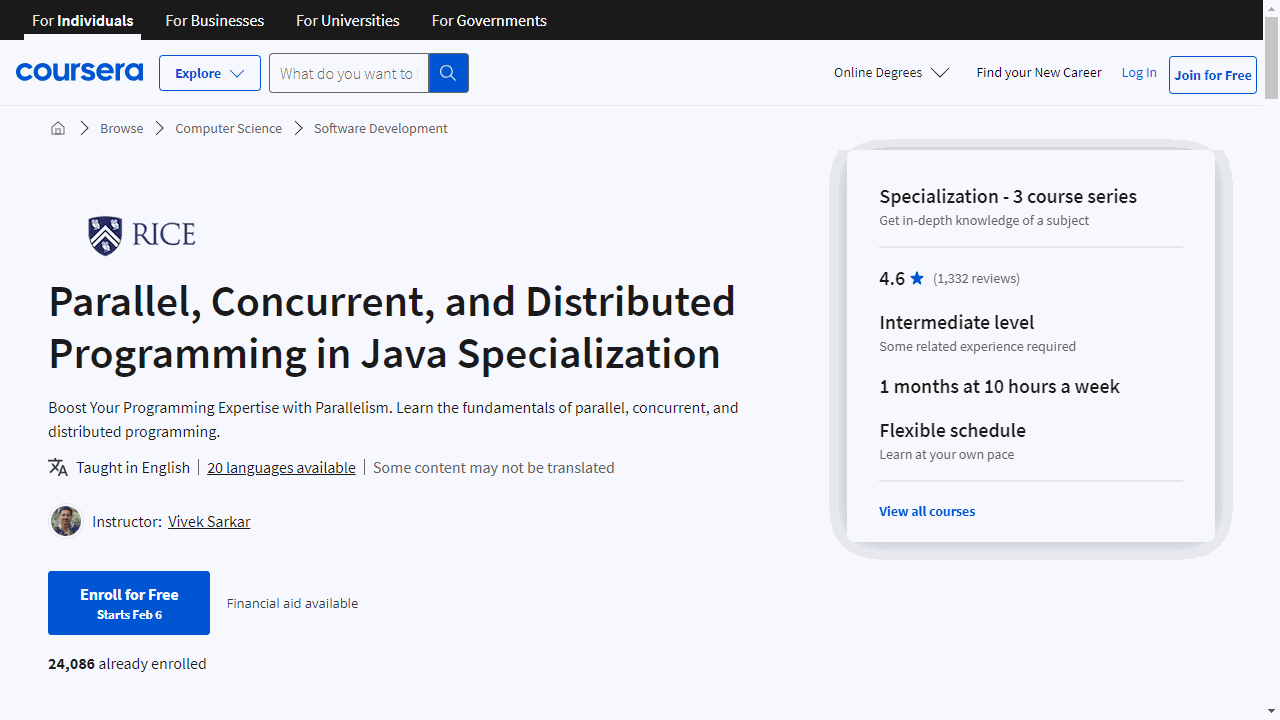

Parallel, Concurrent, and Distributed Programming in Java Specialization

Provider: Rice University

Tailored for both students and industry professionals, this specialization equips you with the skills to excel in Java programming across multicore and distributed systems.

Let’s break down what each course offers and how it can benefit you.

“Parallel Programming in Java” is your gateway to mastering multicore programming.

It teaches you to use Java 8’s parallel frameworks, such as Fork/Join and Streams, to speed up applications on multicore hardware.

You’ll study the theory of parallelism, learn to identify and avoid data races, and explore determinism.

Practical mini-projects solidify your learning, preparing you to apply these concepts in real-world scenarios.
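To give a flavor of the kind of code this course deals with, here is a minimal sketch that computes the same sum of squares with a sequential stream and with a parallel stream. It is an illustration, not material taken from the course: the class and method names (`ParallelSumSketch`, `sequentialSumOfSquares`, `parallelSumOfSquares`) are made up for this example.

```java
import java.util.stream.LongStream;

public class ParallelSumSketch {

    // Sum of squares 1^2 + 2^2 + ... + n^2 on a single thread.
    static long sequentialSumOfSquares(long n) {
        return LongStream.rangeClosed(1, n)
                .map(x -> x * x)
                .sum();
    }

    // Same computation, but the stream is marked parallel, so the work
    // is split across the threads of the common Fork/Join pool.
    static long parallelSumOfSquares(long n) {
        return LongStream.rangeClosed(1, n)
                .parallel()
                .map(x -> x * x)
                .sum();
    }

    public static void main(String[] args) {
        long n = 1_000_000;
        long sequential = sequentialSumOfSquares(n);
        long parallel = parallelSumOfSquares(n);
        // Integer addition is associative, so both versions agree
        // regardless of how the parallel work is divided.
        System.out.println(sequential == parallel); // prints "true"
    }
}
```

Because the lambda has no side effects and shares no mutable state, the parallel version is free of data races and always produces the same result, which is the determinism property mentioned above.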

Moving on, “Concurrent Programming in Java” focuses on the efficient management of shared resources.

It covers threads, locks, and atomic variables in Java 8, along with the theory of concurrency you need to avoid common errors.

This course is crucial for writing robust concurrent Java programs.
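As a rough illustration of why atomic variables matter, the sketch below has several threads increment one shared counter. It is not taken from the course, and the names (`AtomicCounterSketch`, `countWithAtomic`) are hypothetical. With a plain `int` field, concurrent increments could be lost; `AtomicInteger.incrementAndGet()` performs each update atomically.

```java
import java.util.concurrent.atomic.AtomicInteger;

public class AtomicCounterSketch {

    // Starts `threads` workers that each increment a shared counter
    // `incrementsPerThread` times, then returns the final count.
    static int countWithAtomic(int threads, int incrementsPerThread)
            throws InterruptedException {
        AtomicInteger counter = new AtomicInteger();
        Thread[] workers = new Thread[threads];

        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < incrementsPerThread; j++) {
                    // Atomic read-modify-write: no update is lost.
                    counter.incrementAndGet();
                }
            });
            workers[i].start();
        }

        // Wait for every worker to finish before reading the result.
        for (Thread worker : workers) {
            worker.join();
        }
        return counter.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(countWithAtomic(4, 100_000)); // prints 400000
    }
}
```

A lock (for example a `synchronized` block around a plain `int`) would also make the count correct; the atomic variable simply avoids explicit locking for this single-variable update.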

Lastly, “Distributed Programming in Java” teaches you to increase application throughput and reduce latency by using multiple nodes in a data center.

You’ll gain hands-on experience with frameworks like Hadoop and Spark and learn to combine multicore and distributed parallelism effectively.

This course is ideal for scaling Java applications across servers.

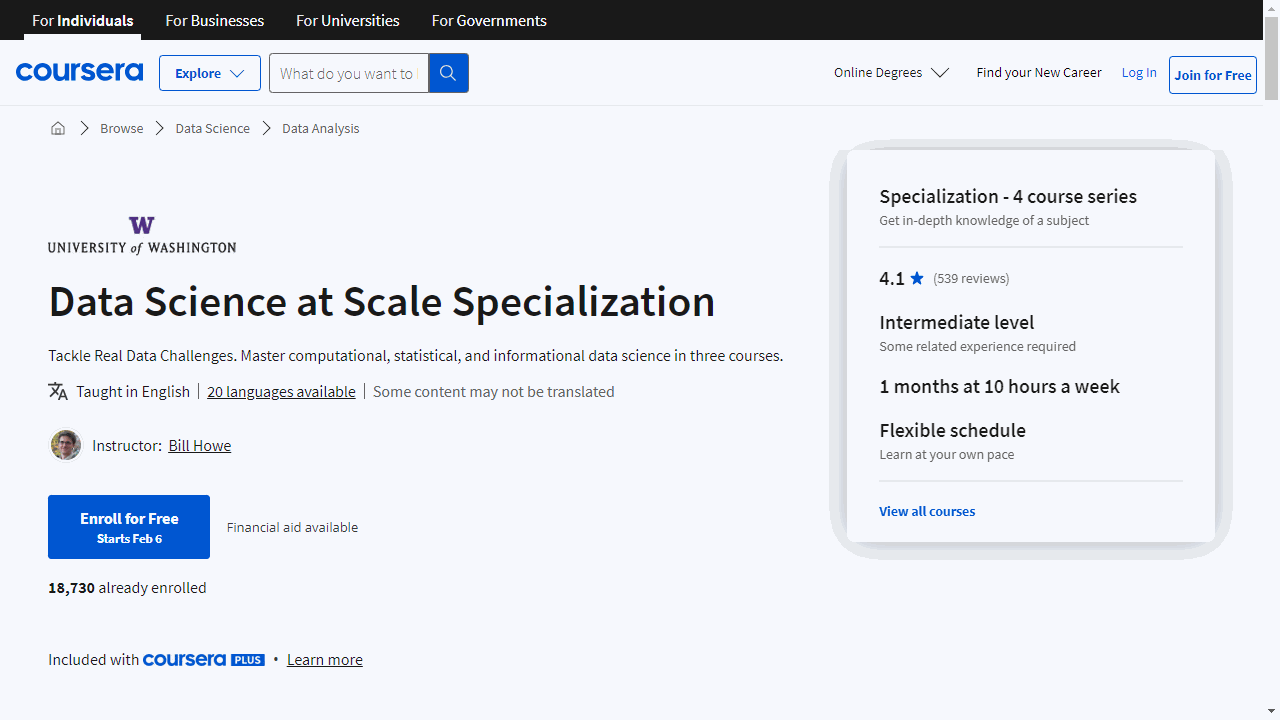

Data Science at Scale Specialization

Provider: University of Washington

This series equips you with essential skills to manage and analyze big data effectively.

“Data Manipulation at Scale: Systems and Algorithms” introduces you to the critical computing resources and programming concepts needed for large-scale data analysis.

You’ll explore cloud computing, SQL and NoSQL databases, MapReduce, and Spark, learning how to navigate data science projects and write efficient Spark programs.

This course is ideal if you aim to understand and work with large datasets.

In “Practical Predictive Analytics: Models and Methods,” you’ll dive into statistical experiment design and analytics.

This course sharpens your ability to design experiments, analyze results, and apply machine learning techniques such as random forests and gradient descent.

It’s perfect for enhancing your predictive analytics skills with practical applications.

“Communicating Data Science Results” focuses on the crucial skills of visualization and ethical considerations in data science.

You’ll learn to create impactful visualizations and understand the ethical implications of big data, ensuring responsible communication of your findings.

Additionally, this course covers using cloud computing for reproducible analysis of large datasets, a must-have skill for impactful data science projects.

The “Data Science at Scale - Capstone Project” brings your learning full circle.

You’ll tackle a real-world project, applying your skills in data preparation, model construction, and result evaluation.

This capstone project offers a hands-on opportunity to apply your knowledge in a practical setting, preparing you for real-world data science challenges.

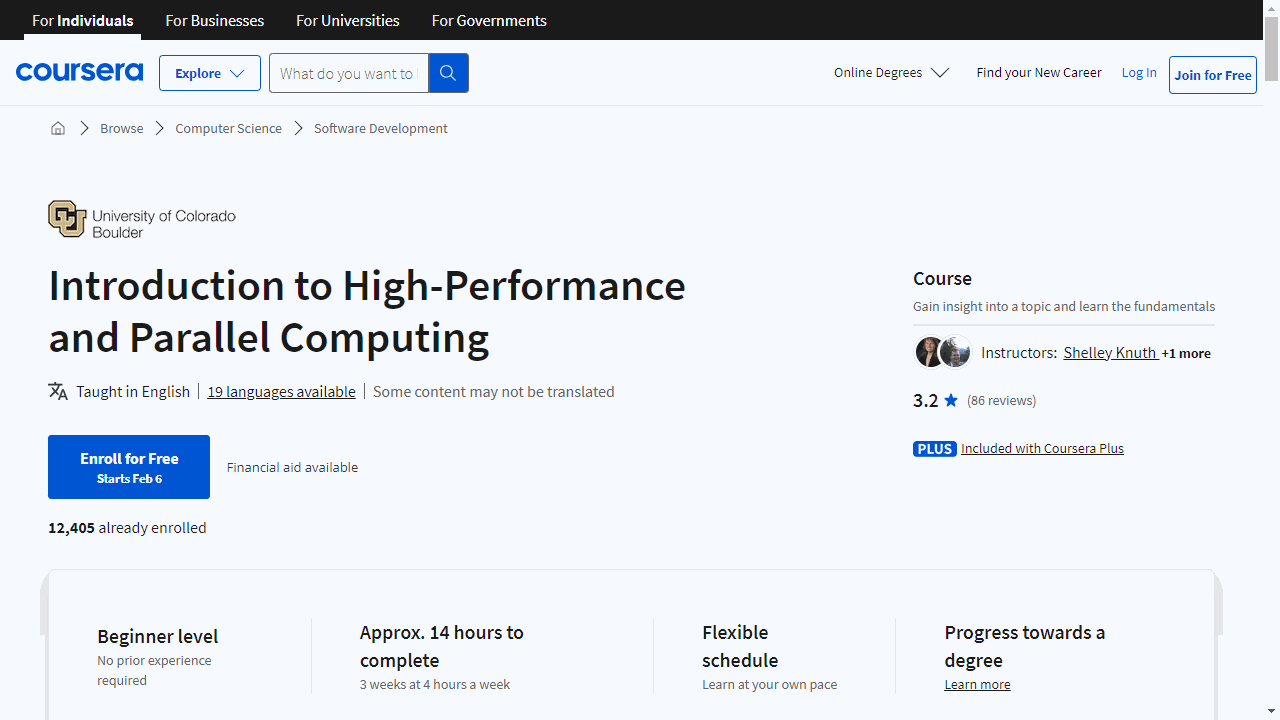

Introduction to High-Performance and Parallel Computing

Provider: University of Colorado Boulder

This course prepares you for the complexities of high-performance computing (HPC) and parallel computing, essential for solving advanced computing challenges efficiently.

The course opens with a practical tour of JupyterLab and a walkthrough of how to submit assignments, which sets a strong foundation.

It then progresses to in-depth Linux training, split into two segments, to equip you with vital skills for any HPC endeavor.

Accessing remote systems and understanding filesystems are also covered, ensuring you’re well-versed in managing data across distributed networks.

A significant portion of the course is dedicated to Bash scripting, taught in three comprehensive parts.

This skill is indispensable for automating tasks and streamlining system management.

Moreover, the course emphasizes community engagement, encouraging you to introduce yourself, seek technical help, and participate in discussions, fostering a supportive learning environment.

The curriculum delves into the heart of high-performance computing, starting with HPC architecture and exploring software, node types, and resource management.

You’ll become proficient in submitting jobs with Slurm, a workload manager widely used on HPC clusters.

The course offers practical experience in job submission, ensuring you gain confidence in these essential tasks.

Key to this course is the exploration of serial versus parallel processing, parallel memory models, and the distinctions between data and task parallelism.

It also covers high-throughput computing, guiding you through the nuances of code parallelization to enhance application performance and efficiency.

A standout feature of this course is its focus on scalability, speedup, and parallel efficiency.

You’ll explore the boundaries of scaling through strong and weak scaling studies, gaining insights into effective application scaling.

This knowledge is critical for anyone aiming to excel in environments where maximizing performance and efficiency is paramount.
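For readers new to these terms, speedup is usually defined as the serial run time divided by the parallel run time, and parallel efficiency as the speedup divided by the number of workers. A strong scaling study keeps the problem size fixed while adding workers; a weak scaling study grows the problem size along with the worker count. The sketch below applies the two formulas to made-up timings. The class name `ScalingSketch` and the numbers are hypothetical, not taken from the course.

```java
public class ScalingSketch {

    // Speedup: how many times faster the parallel run is than the serial run.
    static double speedup(double serialSeconds, double parallelSeconds) {
        return serialSeconds / parallelSeconds;
    }

    // Parallel efficiency: speedup per worker (1.0 would be ideal scaling).
    static double efficiency(double serialSeconds, double parallelSeconds, int workers) {
        return speedup(serialSeconds, parallelSeconds) / workers;
    }

    public static void main(String[] args) {
        // Hypothetical timings: 120 s on one core, 20 s on 8 cores.
        double serialSeconds = 120.0;
        double parallelSeconds = 20.0;
        int workers = 8;

        System.out.println(speedup(serialSeconds, parallelSeconds));             // prints 6.0
        System.out.println(efficiency(serialSeconds, parallelSeconds, workers)); // prints 0.75
    }
}
```

In this made-up case, eight workers deliver a sixfold speedup, so each worker is used at 75% efficiency; the remaining 25% reflects overhead such as communication or serial portions of the code.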

By focusing on key skills such as Linux, Bash scripting, and Slurm job submission, and diving deep into scalability and efficiency, this course equips you to tackle the challenges of distributed systems head-on.