To put it simply, feature scaling is not required for logistic regression, but it can be beneficial in a number of scenarios.

It helps improve the convergence of gradient-based optimization algorithms and ensures that regularization techniques, like L1 and L2, are applied uniformly across all features.

Another advantage to scaling is that it can help with the interpretation of the model coefficients.

In this tutorial, we will explore the impact of feature scaling on logistic regression’s performance using the Red Wine dataset as an example.

When Do Logistic Regression Models Require Feature Scaling

Although Logistic Regression does not rely on distance calculations like KNN, there are certain scenarios when feature scaling can make a big difference in the performance of the model.

When using gradient-based optimization algorithms like Gradient Descent, Stochastic Gradient Descent, or Mini-Batch Gradient Descent, feature scaling can help in speeding up the convergence.

The algorithms work by iteratively updating the model parameters in small steps, nudging them in the direction that minimizes the prediction error.

Sometimes your model won’t converge at all if you don’t scale your features.

This is because a single learning rate is shared by all parameters: a step small enough for the features with the largest ranges is too small for the rest, while a larger step makes the updates overshoot and jump around the parameter space.

When the features are already on a similar scale, or when the solver is less sensitive to feature scale than plain gradient descent, feature scaling might not have a significant impact on logistic regression’s performance.
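The convergence issue described above can be reproduced on synthetic data. The sketch below is not part of the wine experiment: it assumes two made-up features, one with a range 100 times larger than the other, and a hand-written batch gradient descent (the helper names `sigmoid`, `log_loss`, and `gradient_descent` are illustrative only).

```python
import numpy as np

def sigmoid(z):
    # Numerically stable logistic function
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def log_loss(X, y, w, b):
    z = X @ w + b
    # mean of log(1 + exp(z)) - y*z, computed without overflow
    return float(np.mean(np.logaddexp(0.0, z) - y * z))

def gradient_descent(X, y, lr=0.5, n_steps=500):
    """Plain batch gradient descent for logistic regression."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(n_steps):
        error = sigmoid(X @ w + b) - y          # shape (n_samples,)
        w -= lr * (X.T @ error) / len(y)
        b -= lr * error.mean()
    return w, b

rng = np.random.default_rng(0)
z = rng.normal(size=(500, 2))                   # two standard-normal signals
y = (rng.random(500) < sigmoid(1.5 * z[:, 0] + 1.5 * z[:, 1])).astype(float)

X_raw = z * np.array([1.0, 100.0])              # second feature has a 100x larger range
X_scaled = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0)

# Same learning rate and number of steps for both versions of the data
w_raw, b_raw = gradient_descent(X_raw, y)
w_scaled, b_scaled = gradient_descent(X_scaled, y)

loss_raw = log_loss(X_raw, y, w_raw, b_raw)
loss_scaled = log_loss(X_scaled, y, w_scaled, b_scaled)
print(f"log loss after 500 steps, raw features:    {loss_raw:.4f}")
print(f"log loss after 500 steps, scaled features: {loss_scaled:.4f}")
```

With the same learning rate, the run on standardized features settles to a low loss, while the run on raw features keeps overshooting along the large-range feature.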

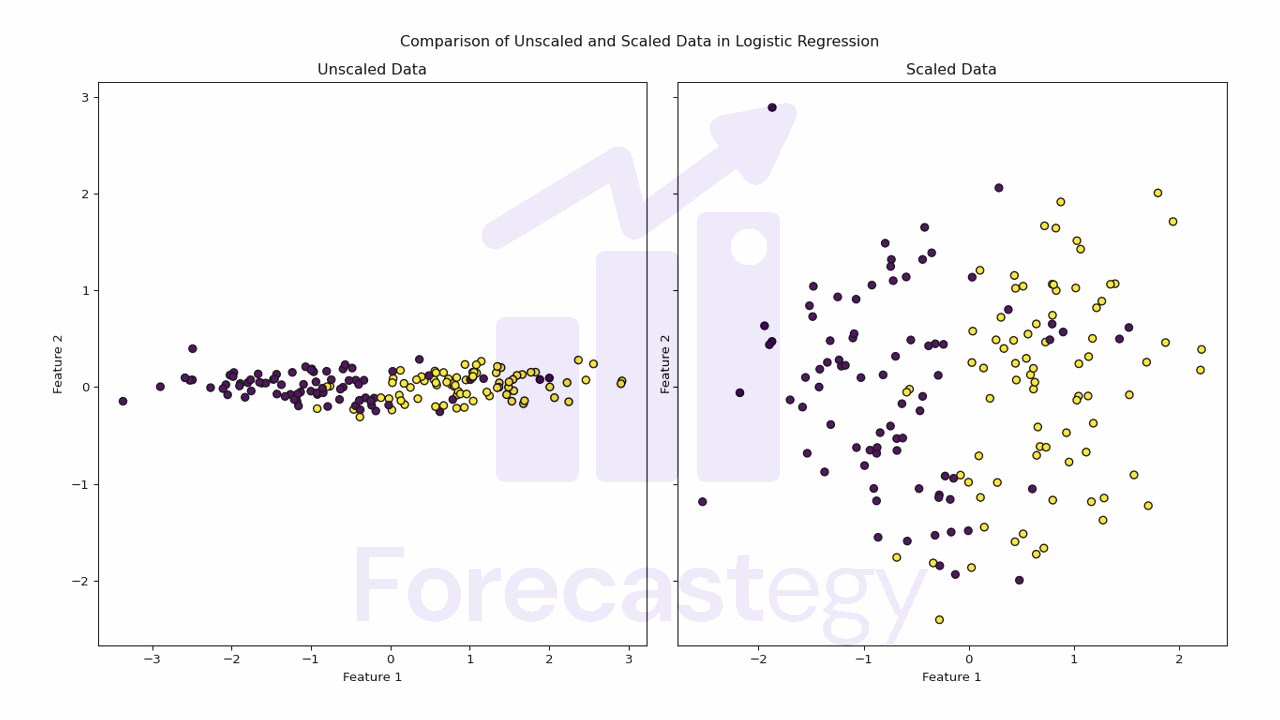

Logistic Regression Performance With and Without Feature Scaling

In this section, we will compare the performance of logistic regression with and without feature scaling using StandardScaler.

Additionally, we will compare the effect of feature scaling on two ways of training the model: the liblinear solver in LogisticRegression and Stochastic Gradient Descent (SGD) through SGDClassifier.

We will use the Red Wine Quality dataset from the UCI Machine Learning Repository.

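```python
import pandas as pd

# Red Wine Quality data from the UCI repository (semicolon-separated CSV)
url = "https://archive.ics.uci.edu/ml/machine-learning-databases/wine-quality/winequality-red.csv"
wine_data = pd.read_csv(url, sep=";")

# Binarize the target: quality >= 6 becomes 1, anything lower becomes 0
wine_data['quality'] = (wine_data['quality'] >= 6).astype(int)
```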

The first five rows of the raw file look like this (quality is shown as the original score, before it is binarized):

| fixed acidity | volatile acidity | citric acid | residual sugar | chlorides | free sulfur dioxide | total sulfur dioxide | density | pH | sulphates | alcohol | quality |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 7.4 | 0.7 | 0 | 1.9 | 0.076 | 11 | 34 | 0.9978 | 3.51 | 0.56 | 9.4 | 5 |
| 7.8 | 0.88 | 0 | 2.6 | 0.098 | 25 | 67 | 0.9968 | 3.2 | 0.68 | 9.8 | 5 |
| 7.8 | 0.76 | 0.04 | 2.3 | 0.092 | 15 | 54 | 0.997 | 3.26 | 0.65 | 9.8 | 5 |
| 11.2 | 0.28 | 0.56 | 1.9 | 0.075 | 17 | 60 | 0.998 | 3.16 | 0.58 | 9.8 | 6 |
| 7.4 | 0.7 | 0 | 1.9 | 0.076 | 11 | 34 | 0.9978 | 3.51 | 0.56 | 9.4 | 5 |

We split the dataset into features and labels, and then split the data into training and test sets.

```python
from sklearn.model_selection import train_test_split

# Features are every column except the target
X = wine_data.drop('quality', axis=1)
y = wine_data['quality']

# Hold out 30% of the rows for testing
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)
```

Let’s see how feature scaling affects logistic regression when using the Stochastic Gradient Descent (SGD) optimizer.

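```python
from sklearn.linear_model import SGDClassifier
from sklearn.metrics import accuracy_score

# Note: with the default loss="hinge" this fits a linear SVM;
# pass loss="log_loss" (scikit-learn >= 1.1) to fit logistic regression with SGD.
sgd = SGDClassifier(random_state=42)
sgd.fit(X_train, y_train)

y_pred = sgd.predict(X_test)
accuracy = accuracy_score(y_test, y_pred)
print("SGD Accuracy without scaling:", accuracy)
```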

A random_state of 42 is set for reproducibility.

The accuracy of the SGD model without feature scaling is 45.83%.

Let’s see how it changes when we scale the features with StandardScaler.

```python
from sklearn.preprocessing import StandardScaler

# Fit the scaler on the training set only, then reuse its statistics on the test set
standard_scaler = StandardScaler()
X_train_standard = standard_scaler.fit_transform(X_train)
X_test_standard = standard_scaler.transform(X_test)

sgd_standard = SGDClassifier(random_state=42)
sgd_standard.fit(X_train_standard, y_train)

y_pred_standard = sgd_standard.predict(X_test_standard)
accuracy_standard = accuracy_score(y_test, y_pred_standard)
print("SGD Accuracy with Standard scaling:", accuracy_standard)
```

Running the code above gives us an accuracy of 52.92%, roughly 7 percentage points higher than the unscaled model.

Looking at the Red Wine Quality dataset, we can observe that several features have different scales.

Features such as “free sulfur dioxide” and “total sulfur dioxide” have significantly larger ranges compared to features like “chlorides” and “sulphates.”

Standardization rescales each feature to have a mean of 0 and a standard deviation of 1, so that no feature dominates the weight updates simply because of the units it is measured in.

As a result, logistic regression can more effectively learn the relationships between the features and the target variable.
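To make the rescaling concrete, here is a small sketch that uses four columns from the five sample rows shown earlier. It prints the range of each column and checks that subtracting the mean and dividing by the (population) standard deviation gives the same result as StandardScaler. The `sample`, `manual`, and `scaled` names are only for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Four columns from the five sample rows of the dataset
sample = pd.DataFrame({
    "free sulfur dioxide": [11, 25, 15, 17, 11],
    "total sulfur dioxide": [34, 67, 54, 60, 34],
    "chlorides": [0.076, 0.098, 0.092, 0.075, 0.076],
    "sulphates": [0.56, 0.68, 0.65, 0.58, 0.56],
})

# Range (max - min) of each column, to see how different the scales are
print(sample.max() - sample.min())

# Standardize by hand: z = (x - mean) / std, with the population std (ddof=0)
manual = (sample - sample.mean()) / sample.std(ddof=0)

# StandardScaler applies the same formula column by column
scaled = StandardScaler().fit_transform(sample)
print("Matches StandardScaler:", np.allclose(manual.to_numpy(), scaled))
```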

SGD is not the only optimizer that we can use to train a logistic regression model.

SGD is an online learning algorithm, which means it processes training data one instance at a time or in small batches.

This makes it suitable for handling large datasets or streaming data, as it doesn’t require loading the entire dataset into memory at once.

On the other hand, liblinear is a batch solver: it needs the entire dataset in memory, and when it runs as a Newton-type method it also relies on second-order (Hessian) information, which makes each iteration more expensive than an SGD update.

Let’s see how feature scaling affects logistic regression when using the liblinear solver.

First, we’ll train the model without feature scaling.

```python
from sklearn.linear_model import LogisticRegression

# liblinear solver on the raw (unscaled) features
log_reg = LogisticRegression(solver='liblinear', random_state=42)
log_reg.fit(X_train, y_train)

y_pred = log_reg.predict(X_test)
accuracy = accuracy_score(y_test, y_pred)
print("Accuracy without scaling:", accuracy)
```

The accuracy of the logistic regression model with the liblinear solver without feature scaling is 56.25%.

It’s already better than the SGD model in this dataset, but let’s see how it changes when we scale the features with StandardScaler.

```python
# Same model, trained on the standardized features
log_reg_standard = LogisticRegression(solver='liblinear', random_state=42)
log_reg_standard.fit(X_train_standard, y_train)

y_pred_standard = log_reg_standard.predict(X_test_standard)
accuracy_standard = accuracy_score(y_test, y_pred_standard)
print("Accuracy with Standard scaling:", accuracy_standard)
```

The accuracy of the logistic regression model with the liblinear solver and feature scaling is 56.87%, a slight improvement over the previous model.

See how the effect is much less pronounced in this case compared to the SGD model?

Liblinear can handle data with different scales without too much fuss.

However, it’s still a good idea to try scaling your features, as it might slightly improve your model’s performance.
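To put "slight" into perspective, the two liblinear accuracies can be converted back into counts of correctly classified test wines. The sketch below assumes the red wine file has 1,599 rows and that train_test_split rounds the test set size up, which gives 480 test samples for a 30% split.

```python
import math

n_rows = 1599                          # assumed number of rows in winequality-red.csv
test_size = 0.3                        # same split as in the tutorial
n_test = math.ceil(test_size * n_rows)

acc_unscaled = 0.5625                  # liblinear without scaling (reported above)
acc_scaled = 0.5687                    # liblinear with StandardScaler (reported above)

correct_unscaled = round(acc_unscaled * n_test)
correct_scaled = round(acc_scaled * n_test)

print("Test samples:", n_test)
print("Correct without scaling:", correct_unscaled)
print("Correct with scaling:", correct_scaled)
print("Difference:", correct_scaled - correct_unscaled)
```

Under these assumptions the gap between the two models is only three test samples, so it should not be read as strong evidence either way.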

Logistic Regression Regularization With and Without Feature Scaling

When applying regularization techniques like L1 (Lasso) or L2 (Ridge) regularization, feature scaling ensures that the penalty is applied uniformly across all features.

Regularization introduces a penalty term to the loss function, which helps prevent overfitting by reducing the complexity of the model.
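To see why the scale of a feature matters for the penalty, it helps to write the objective down. The following is a sketch of the L2-penalized logistic regression objective in the form used by the scikit-learn user guide, where C is the inverse regularization strength; the symbols $w$, $b$, and $s$ are notation introduced here.

$$
\min_{w,\,b}\; \frac{1}{2}\lVert w\rVert_2^2 \;+\; C\sum_{i=1}^{n}\log\!\left(1+e^{-y_i\,(w^\top x_i+b)}\right),\qquad y_i\in\{-1,+1\}
$$

Suppose feature $j$ is re-expressed in different units, $x'_{ij} = x_{ij}/s$. To produce the same predictions, its coefficient has to become $w'_j = s\,w_j$, so its share of the penalty changes from $\tfrac{1}{2}w_j^2$ to $\tfrac{1}{2}s^2 w_j^2$:

$$
w'_j\,x'_{ij} = (s\,w_j)\,\frac{x_{ij}}{s} = w_j\,x_{ij},
\qquad
\frac{1}{2}\,{w'_j}^{2} = \frac{s^2}{2}\,w_j^{2}
$$

The data term is unchanged but the penalty is not, so features measured in large units (which need small coefficients) are penalized less than features measured in small units. Standardizing puts every feature in units of its own standard deviation, which removes this dependence on the original units.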

Let’s use our logistic regression model with the liblinear solver to see how regularization affects the model’s performance.

First, without feature scaling.

```python
# Stronger L2 penalty (smaller C) on the raw features
log_reg_l2 = LogisticRegression(solver='liblinear', penalty='l2', C=0.1, random_state=42)
log_reg_l2.fit(X_train, y_train)

y_pred_l2 = log_reg_l2.predict(X_test)
accuracy_l2 = accuracy_score(y_test, y_pred_l2)
print("Accuracy without scaling:", accuracy_l2)
```

The accuracy of this model, with stronger L2 regularization (C=0.1), is 53.3%.

This is lower than the 56.25% we got with the default settings, which already apply an L2 penalty with C=1.0, but let’s keep this number as a reference for the next model.

```python
# Same penalty strength, trained on the standardized features
log_reg_l2_standard = LogisticRegression(solver='liblinear', penalty='l2', C=0.1, random_state=42)
log_reg_l2_standard.fit(X_train_standard, y_train)

y_pred_l2_standard = log_reg_l2_standard.predict(X_test_standard)
accuracy_l2_standard = accuracy_score(y_test, y_pred_l2_standard)
print("Accuracy with Standard scaling:", accuracy_l2_standard)
```

Keeping the same regularization strength, the accuracy of this model, with L2 regularization and feature scaling, is 56.25%.

So the gain from scaling is larger with the stronger penalty (53.3% to 56.25%) than with the default one (56.25% to 56.87%).
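A single train/test split gives a noisy comparison, so it can be worth repeating it for several values of C with cross-validation. The sketch below does this on synthetic data with deliberately mismatched feature scales; it is an illustration of the procedure, not a reproduction of the wine results, and the `_syn` names and the chosen C values are arbitrary.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data whose columns span four orders of magnitude
X_syn, y_syn = make_classification(n_samples=600, n_features=8,
                                   n_informative=5, random_state=0)
X_syn = X_syn * np.logspace(-2, 2, num=8)

results = {}
for C in (0.01, 0.1, 1.0):
    raw_model = LogisticRegression(solver="liblinear", C=C, random_state=42)
    scaled_model = make_pipeline(
        StandardScaler(),
        LogisticRegression(solver="liblinear", C=C, random_state=42),
    )
    # Mean 5-fold accuracy without and with scaling
    results[C] = (
        cross_val_score(raw_model, X_syn, y_syn, cv=5).mean(),
        cross_val_score(scaled_model, X_syn, y_syn, cv=5).mean(),
    )
    print(f"C={C}: raw={results[C][0]:.3f}, scaled={results[C][1]:.3f}")
```

Because the scaler sits inside the pipeline, it is refitted on the training folds of every split, so the comparison stays free of leakage.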

Check how feature scaling affects SVMs next.